Many ML candidates assume the interviewer is evaluating how advanced their machine learning knowledge is.

In reality, the interviewer is often evaluating something simpler:

Can this person explain technical ideas clearly enough to work with other humans?

A surprising number of ML interviews become difficult not because the candidate lacks knowledge, but because their explanation becomes impossible to follow.

This happens especially often when the interview is conducted in English.

Candidates start with a simple idea like overfitting or gradient descent, then suddenly jump into equations, edge cases, research terminology, and disconnected details.

The interviewer loses the thread.

What strong ML explanations feel like

Strong interview explanations usually feel layered.

The interviewer understands the big idea first, then the details.

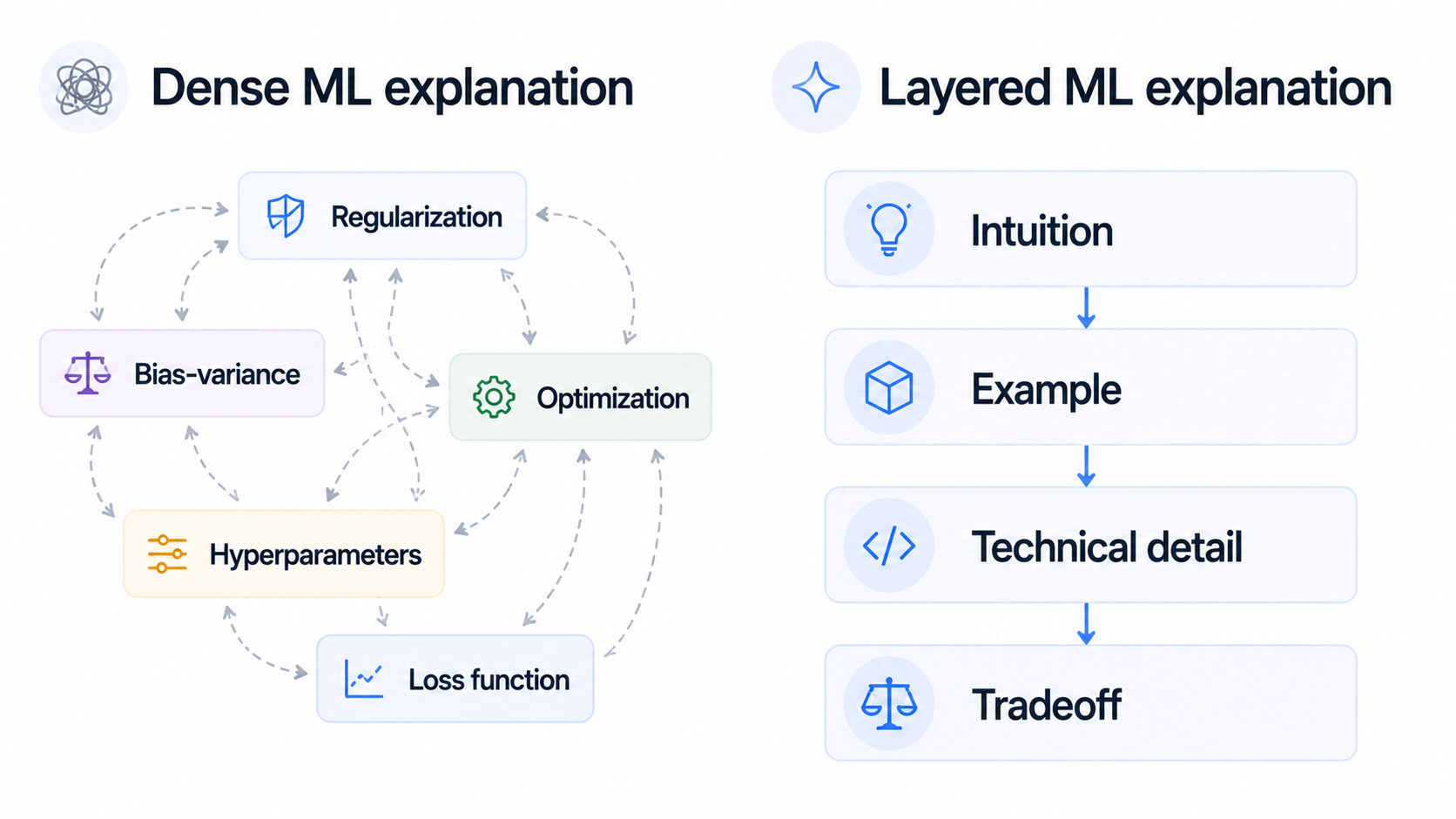

Dense explanation

- Starts with theory immediately
- Uses undefined jargon
- Adds details before context

Layered explanation

- Starts with the intuition
- Defines terms clearly
- Adds complexity gradually

Key insight: In ML interviews, being easy to follow is often more valuable than sounding maximally technical. Interviewers usually trust candidates more when they can explain complex ideas simply.